Crawl Budget: The Unsung Hero of SEO Optimization (Guide for 2024)

Crawl Budget is an SEO term.

It’s the number of pages a search engine like Google will crawl on your website over a given period. It depends on two things: crawl limit (how much crawling your server can handle) and crawl demand (how much the search engine wants to crawl your pages).

For more on optimizing your crawl budget to boost SEO, check out this free guide: A Technical SEO’s Guide to Crawl Budget Optimization.

Why Crawl Budget Matters for SEO

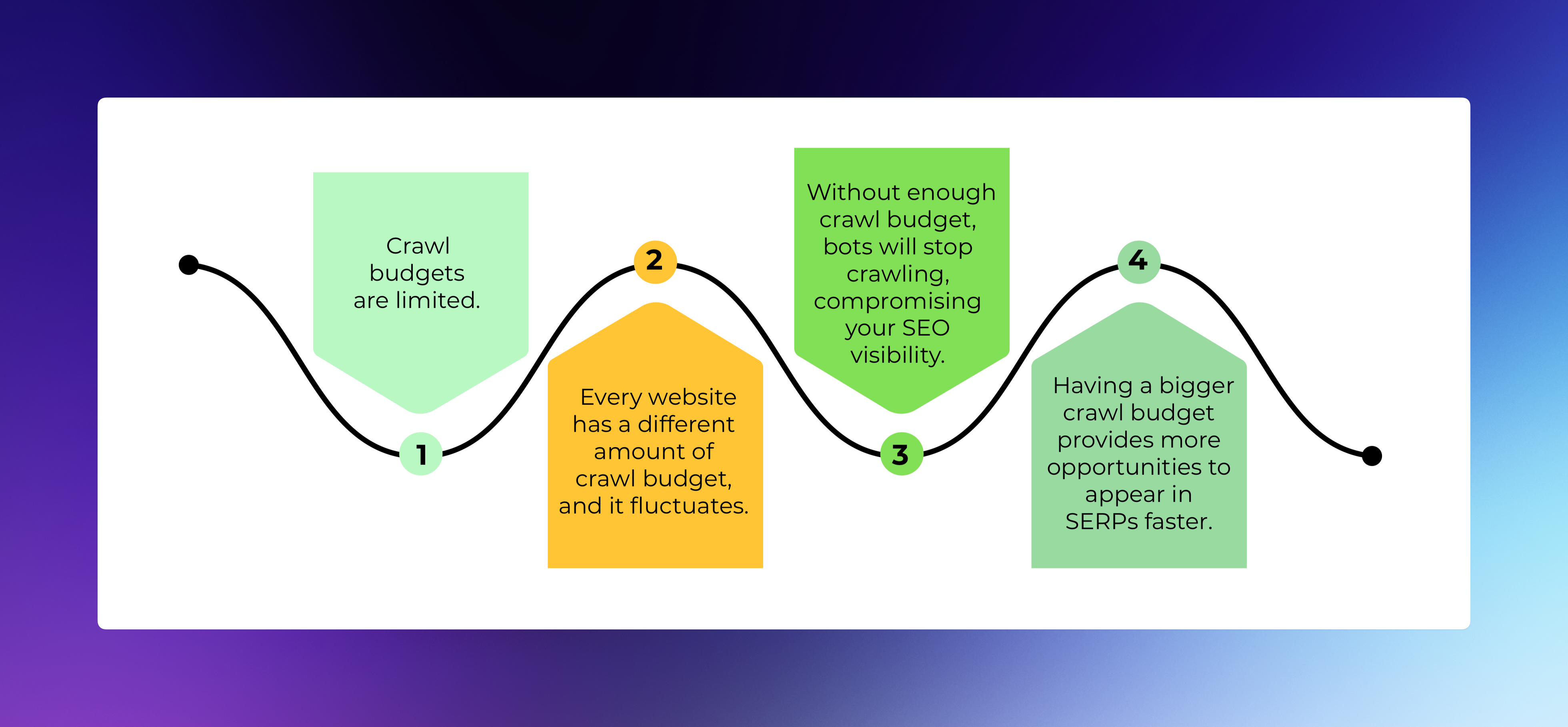

A crawl budget is critical for SEO as it affects how search engines find and index your site’s pages.

If Google doesn’t index a page, the page isn’t in Google’s database, so it can’t rank in search results.

If your site has more pages than your crawl budget covers, some pages may not be crawled or indexed. These pages can still be accessed directly but won’t attract search engine traffic.

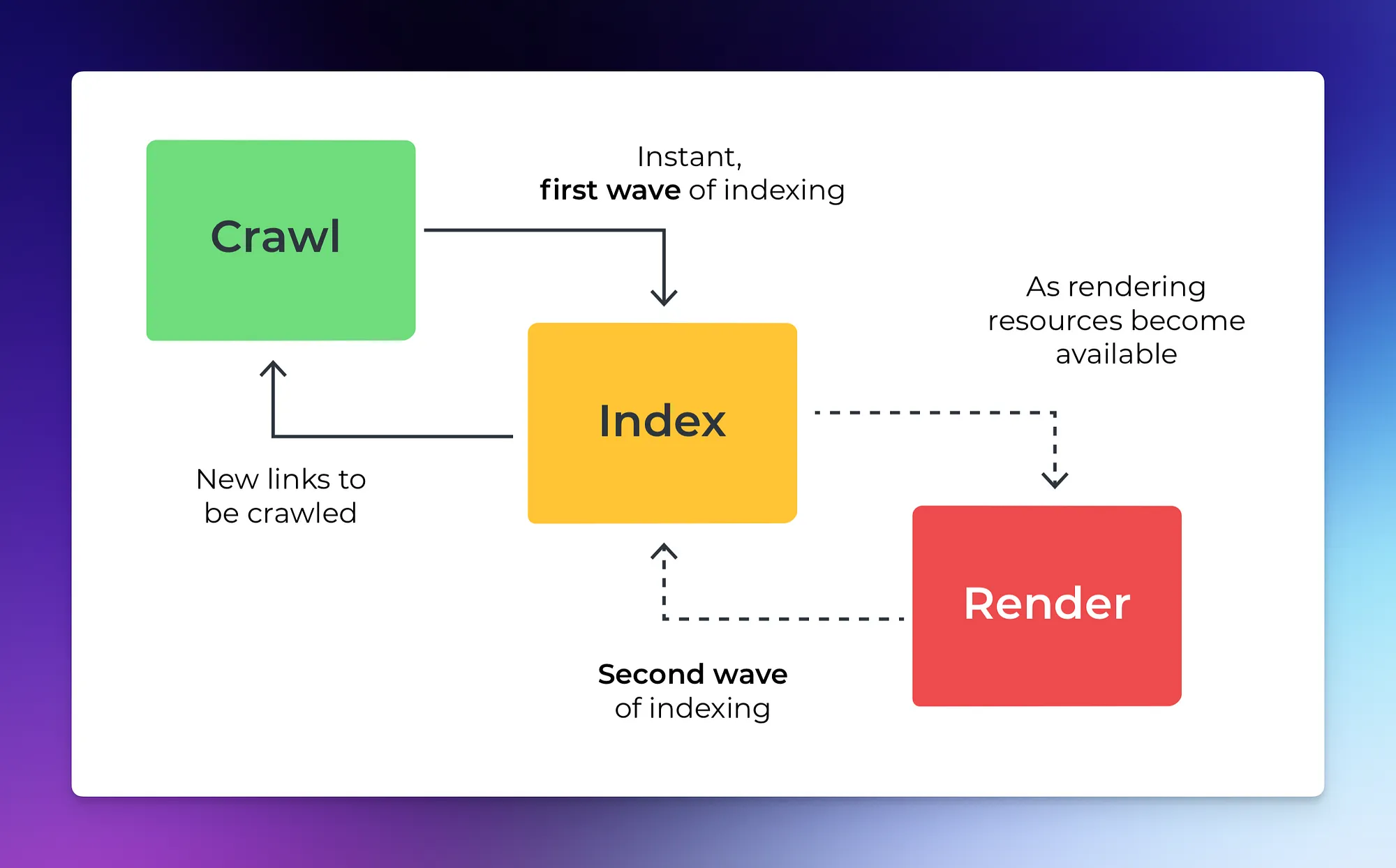

Image source: Prerender.

Most sites don’t need to worry about crawl budget, as Google is efficient at finding and indexing pages.

However, it’s important in these situations:

- Large sites: If your site (like an e-commerce site) has 10k+ pages, Google might only find some of them.

- New pages: If you’ve added a new section with hundreds of pages, ensure your crawl budget can accommodate quick indexing.

- Redirects: Numerous redirects and redirect chains can consume your crawl budget.

Understanding Crawl Budget and Crawl Limit

The crawl limit fluctuates based on several factors:

- Crawl health: If your site responds quickly, the limit increases, allowing more connections for crawling. If your site slows down or returns server errors, the limit decreases, and Googlebot crawls less.

- Limit set in Search Console: You can choose to reduce Googlebot’s crawling of your site.

- Google’s crawling capacity: Google has many resources, but they are not unlimited.

What Determines Crawl Budget?

Google decides the crawl budget. It considers website size, page speed, crawl limit in Search Console, and crawl errors.

Image source: Prerender.

Website structure, duplicate content, soft 404 errors, low-value pages, website speed, and security issues also affect the crawl budget.

Crawl Budget and Crawl Rate

Crawl budget refers to the number of pages a search engine will crawl over a specific time. The crawl rate, however, is the speed at which these pages are crawled.

Simply put, crawl budget is about how many pages get crawled, while crawl rate is about how often a search engine requests pages from your site within a specific time frame.

How Crawl Budget Impacts SEO Factors

Here’s how crawl budget impacts SEO factors:

- HTTPS Migration: When a site migrates, Google increases crawl demand to update its index with new URLs quickly.

- URL Parameters: Too many URL parameters can create duplicate content, draining the crawl budget and reducing the chances of indexing important pages.

- XML Sitemaps: A well-structured, updated XML sitemap helps Google find new pages faster, potentially increasing the crawl budget.

- Duplicate Content: Sites with lots of duplicate content may get a lower crawl budget, as Google might see these pages as less important.

- Mobile-First Indexing: With mobile-first indexing, Google crawls, indexes, and ranks pages based on the content served to its smartphone user-agent. It doesn’t directly affect rankings but can influence how many pages are crawled and indexed.

- Robots.txt: URLs disallowed in your robots.txt file don’t count against your crawl budget. However, robots.txt helps steer Googlebot toward the pages you want crawled.

- Server Response Time: Quick server responses to Google’s crawl requests can lead to more pages being crawled on your site.

- Site Architecture: A well-structured site helps Googlebot find and index new pages more efficiently.

- Site Speed: Faster pages can lead to Googlebot crawling more of your site’s URLs. Slow pages consume valuable Googlebot time.
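To make the URL parameters point concrete, here is a minimal Python sketch that groups URLs which differ only by parameters that don’t change the page. The parameter list (IGNORED_PARAMS) and the example URLs are assumptions for illustration; your own site will have its own list.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical set of parameters that do not change page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "ref"}


def normalize_url(url):
    """Drop ignored parameters and sort the rest so equivalent URLs compare equal."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    # The fragment is dropped because it does not identify a separate page.
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def group_duplicates(urls):
    """Map each normalized URL to the raw URLs that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize_url(url)].append(url)
    # Keep only groups where more than one raw URL points at the same content.
    return {norm: raw for norm, raw in groups.items() if len(raw) > 1}


urls = [
    "https://example.com/shoes?color=red&utm_source=newsletter",
    "https://example.com/shoes?utm_medium=email&color=red",
    "https://example.com/shoes?color=blue",
]
for normalized, variants in group_duplicates(urls).items():
    print(normalized, "<-", variants)
```

Each group in the output is a set of URLs a crawler could fetch separately even though they show the same content.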

Managing Crawl Budget

Effective crawl budget management helps your essential pages get crawled and indexed, boosting their search engine visibility.

Crawl Budget Management and Optimization

Here are some strategies to manage and optimize your crawl budget effectively:

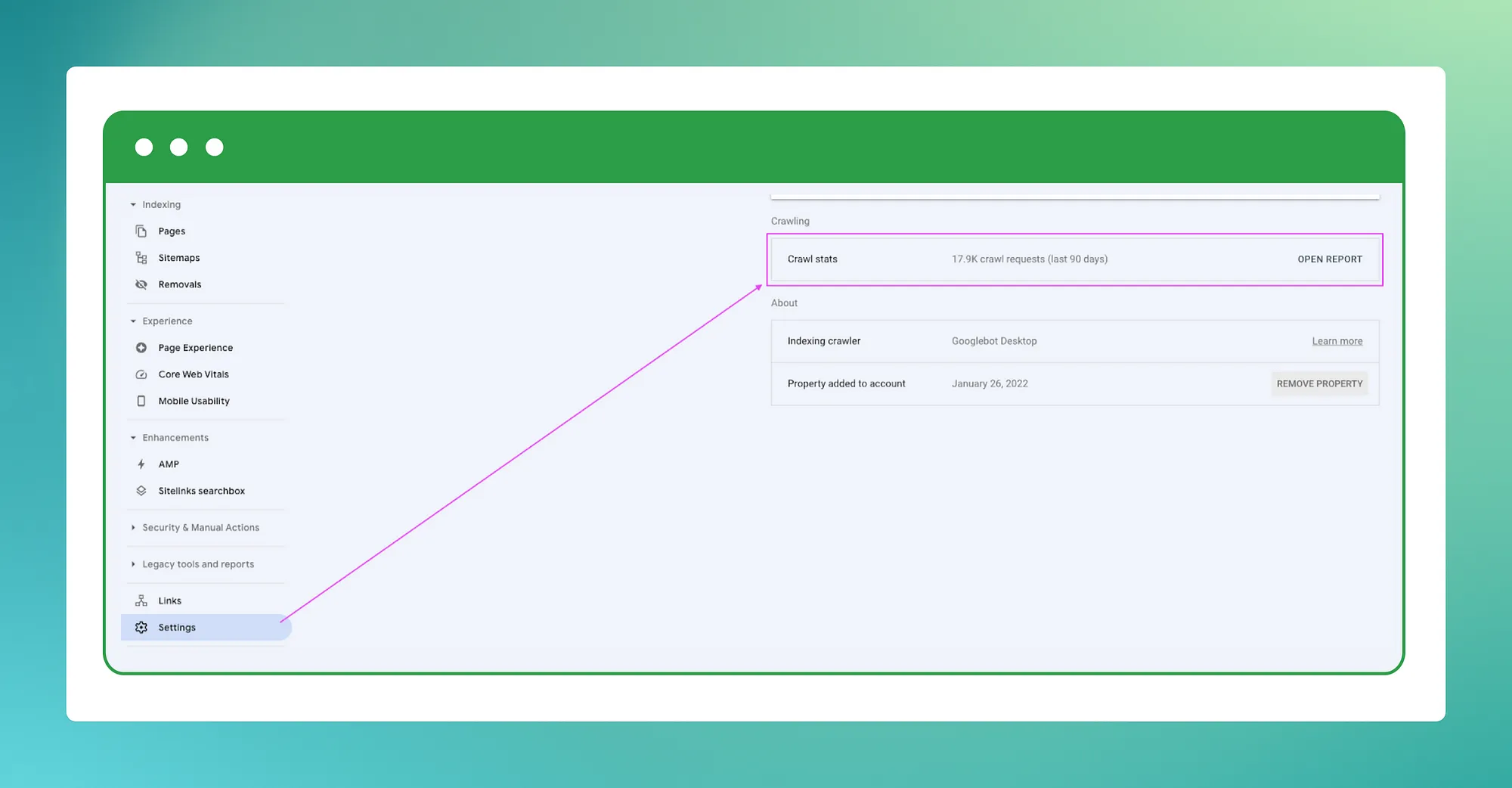

- Monitor crawl stats: Regularly check your site’s crawl stats in Google Search Console to understand Googlebot’s crawling pattern.

- Improve site speed: Enhancing site speed promotes efficient crawling.

- Streamline site structure: A well-organized site aids Googlebot in finding and indexing new pages.

- Minimize redirects: Excessive redirects can deplete your crawl budget.

- Manage URL parameters: Avoid creating duplicate URLs for the same content with too many URL parameter combinations.

- Eliminate 404 and 410 error pages: These error pages can unnecessarily consume your crawl budget.

- Prioritize key pages: Make sure Googlebot can easily access your most important pages.

- Update your XML sitemap regularly: This helps Google discover new pages faster.

- Increase page popularity: Pages with more visits are crawled more frequently.

- Utilize canonical tags: These tags help prevent duplicate content issues.
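For the redirects tip in the list above, a small Python sketch can help spot chains. It assumes you already have a redirect map exported from a site crawl; the map, the function names, and the two-hop threshold are all hypothetical.

```python
def redirect_chain(start, redirects, max_hops=10):
    """Follow redirects from start and return every URL visited, in order."""
    chain = [start]
    seen = {start}
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in seen:
            # Redirect loop: stop instead of following it forever.
            break
        seen.add(target)
    return chain


def long_chains(redirects, min_hops=2):
    """Return the chains that take at least min_hops redirects to resolve."""
    result = {}
    for source in redirects:
        chain = redirect_chain(source, redirects)
        if len(chain) - 1 >= min_hops:
            result[source] = chain
    return result


# Hypothetical redirect map: source path -> redirect target.
redirects = {"/a": "/b", "/b": "/c", "/old": "/new"}
print(long_chains(redirects))
```

Any chain it reports is a candidate for pointing the first URL straight at the final destination.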

Image source: Prerender.

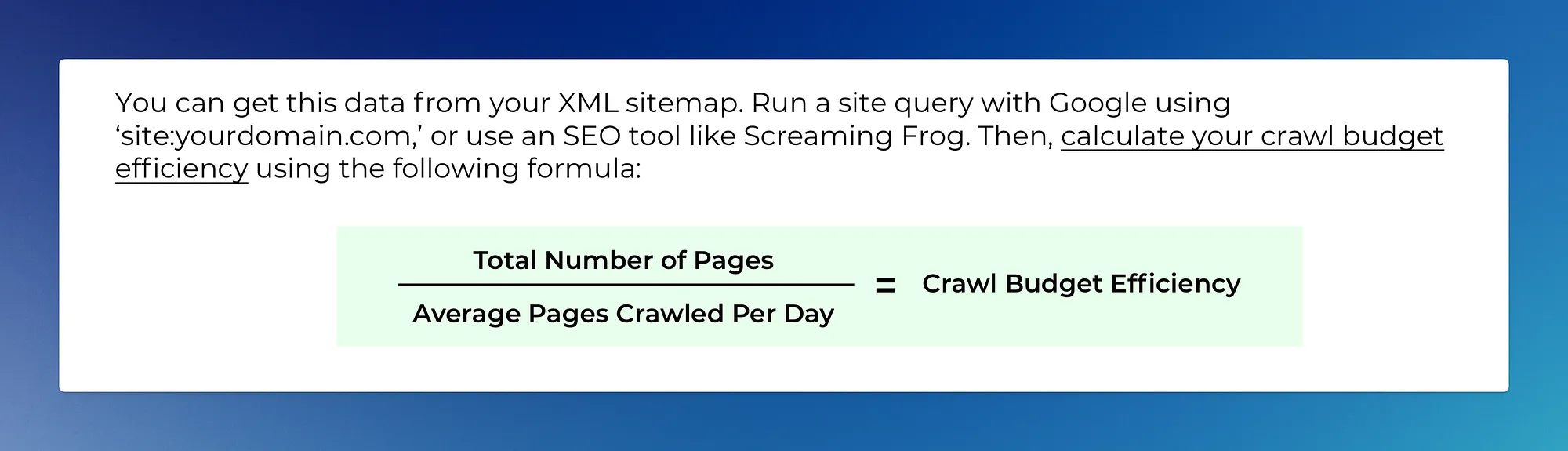

To further optimize your crawl budget, follow these steps:

- In Google Search Console, navigate to “Settings” -> “Crawl stats” and note the average pages crawled per day.

- Divide your total page count by this number.

- If the result exceeds ~10 (indicating you have 10x more pages than what’s crawled daily), consider optimizing your crawl budget.
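The steps above are simple arithmetic. Here is a minimal Python sketch of the same check; the function names and example numbers are made up for illustration, and the threshold of 10 is the rule of thumb from the last step.

```python
def crawl_budget_ratio(total_pages, avg_crawled_per_day):
    """Return how many times larger the site is than the daily crawl volume."""
    if avg_crawled_per_day <= 0:
        raise ValueError("avg_crawled_per_day must be greater than zero")
    return total_pages / avg_crawled_per_day


def needs_optimization(total_pages, avg_crawled_per_day, threshold=10.0):
    """Apply the rule of thumb: a ratio above the threshold is worth a closer look."""
    return crawl_budget_ratio(total_pages, avg_crawled_per_day) > threshold


# Example: 50,000 pages on the site, 2,500 pages crawled per day on average.
print(crawl_budget_ratio(50_000, 2_500))   # 20.0
print(needs_optimization(50_000, 2_500))   # True
```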

Noindex and Crawl Budget

Noindex is a directive to tell search engines not to index a particular page.

This can be a powerful tool for optimizing your crawl budget. Here’s how:

- Better crawl budget allocation: By using noindex on less important or low-value pages, you can effectively guide search engine bots to focus their efforts on crawling and indexing your main, high-value content. This ensures that your crawl budget is spent where it matters most.

- Avoid duplicate content: Duplicate content can drain your crawl budget as search engines might crawl the same content multiple times. Using noindex on duplicate pages can prevent this, preserving your crawl budget.

- High ‘noindex’ to indexable URL ratio: While a high ratio of ‘noindex’ to indexable URLs doesn’t usually affect how Google crawls your site, it could become a problem if many noindexed pages need to be crawled to reach a few indexable ones. In such cases, noindex can help ensure that crawl budget is not wasted on pages that won’t be indexed.
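A noindex directive is usually sent either as a robots meta tag in the HTML or as an X-Robots-Tag HTTP header. The Python sketch below checks a page for both so you can audit which URLs carry the directive. It is a simplified illustration: the function name is an assumption, and it ignores user-agent-specific header values such as "googlebot: noindex". Note that a crawler has to fetch a page to see either signal.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            for directive in attr.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def is_noindex(html, headers=None):
    """Return True if the HTML or the response headers carry a noindex directive."""
    header_directives = set()
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_directives.update(d.strip().lower() for d in value.split(","))
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in header_directives or "noindex" in parser.directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindex(page))                                     # True
print(is_noindex("<html></html>", {"X-Robots-Tag": "noindex"}))  # True
```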

JavaScript and SEO

JavaScript enables dynamic web content, but it can complicate traditional web crawling.

Image source: Prerender.

If JavaScript alters or loads content, crawlers may struggle to access or extract this data, leading to incomplete or incorrect data retrieval.

Optimizing JavaScript for SEO

Optimizing JavaScript for SEO ensures search engines can crawl, render, and index JavaScript-generated content. That’s particularly important for websites and Single Page Applications (SPAs) built with JavaScript frameworks like React, Angular, and Vue.

Here are some JavaScript SEO tips:

- Assign unique titles and snippets to your pages.

- Write search engine-friendly code.

- Use appropriate HTTP status codes.

- Prevent soft 404 errors in SPAs.
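One way to read the last two tips together: the server, not only the client-side router, should decide the status code. Here is a minimal, framework-agnostic Python sketch. The route table (KNOWN_ROUTES), the respond function, and the HTML bodies are all hypothetical; a real SPA would wire this into its own server.

```python
# Hypothetical table of routes the single-page app actually serves.
KNOWN_ROUTES = {"/", "/pricing", "/blog"}

APP_SHELL = "<div id='root'></div>"          # placeholder for the SPA shell
NOT_FOUND_PAGE = "<h1>Page not found</h1>"   # placeholder error page


def respond(path, known_routes=KNOWN_ROUTES):
    """Return (status_code, body) for a request path.

    Serving the app shell with 200 for every path is what creates soft 404s:
    the page says "not found" but the status code says "OK".
    """
    if path in known_routes:
        return 200, APP_SHELL
    return 404, NOT_FOUND_PAGE


print(respond("/pricing"))       # (200, "<div id='root'></div>")
print(respond("/no-such-page"))  # (404, '<h1>Page not found</h1>')
```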

JavaScript Frameworks and SEO

JavaScript frameworks like React, Angular, and Vue.js help build complex web applications. They improve user experience and create interactive web pages.

These frameworks also enhance website performance and optimize rendering.

Using server-side rendering (SSR) or prerendering, developers can ensure search engine bots can easily access and index the content.

Other Ways to Index JavaScript Sites

There are two main ways to crawl data from websites: the traditional way and the JavaScript-enabled way.

The traditional way parses the HTML structure of web pages to get the information we want.

However, it can struggle with JavaScript-heavy websites.

JavaScript-enabled crawling solutions fix this.

They act like humans by rendering JavaScript elements, which lets them access content loaded dynamically.

These solutions can reach more content, especially on websites that use a lot of JavaScript.

Dynamic Rendering

Dynamic rendering is a method that provides different versions of a webpage to users and search engine bots.

When a bot visits your site, it receives a prerendered, static HTML version of the page.

This version is simpler for the bot to crawl and index, enhancing your site’s SEO.

Dynamic Rendering and SEO

Dynamic rendering boosts your site’s SEO.

It enhances the crawlability and indexability of your site, quickens page load times, and improves mobile-friendliness.

It’s especially useful for JavaScript-heavy websites, as it ensures all content is reachable by search engine bots.

Prerendering: A Solution

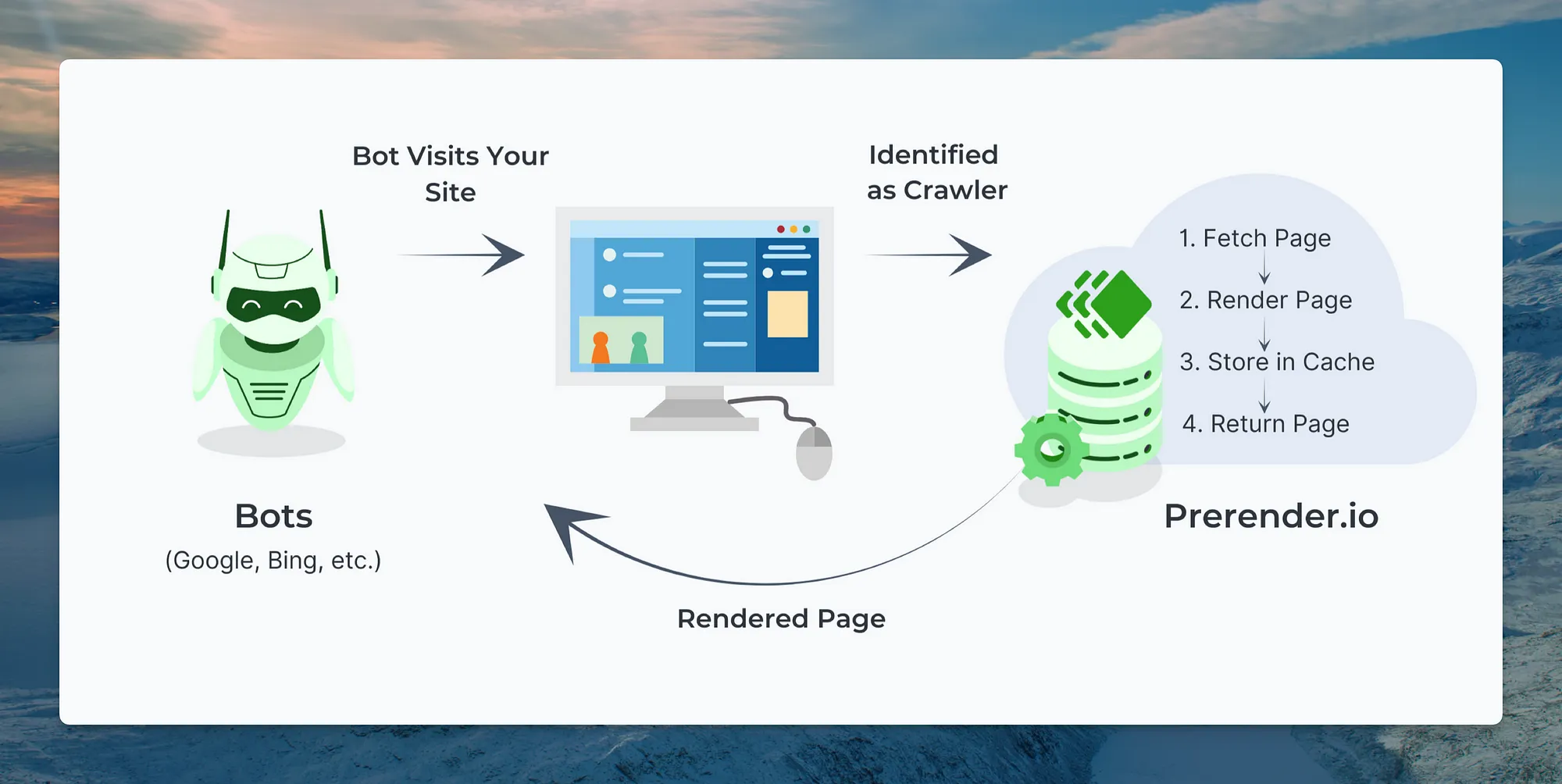

Prerendering is a form of dynamic rendering. It preloads all page elements for a web crawler.

Image source: Prerender.

When a bot visits, the prerender service provides a cached, fully rendered version of your site.

This method improves bot interactions.

Why Use Prerendering?

Prerendering helps SEO in several ways:

- Improves crawl budget and indexing: Prerendering loads all links and content together, making it easier for search engines to find every page quickly. This boosts crawl speed and efficiency.

- Speeds up indexing: Prerendering serves your pages to search engines in less than a second, improving speed and Core Web Vitals (CWV).

- Ensures no content is missed: Prerendering shows a snapshot of your content to Google’s crawlers as static content. This ensures all your text, links, and images are crawled and indexed correctly, enhancing content performance.

Dynamic Rendering vs Server-Side Rendering

Server-side rendering (SSR) and dynamic rendering are two methods used to present web content to users and search engines.

SSR involves rendering the entire page on the server before sending it to the browser.

This means the JavaScript needed to build the page runs on the server, and the user receives a fully rendered page.

It can improve performance and SEO but also put a heavier load on your server.

On the other hand, dynamic rendering provides a static HTML version of the page to search engines and a regular (client-side rendered) version to users.

This means that when a search engine bot visits your site, it receives a prerendered, static HTML version of the page, which is easier for the bot to crawl and index.

Meanwhile, users receive a version of the page that’s rendered in their browser, which can provide a more interactive experience.

Both methods have benefits.

The best choice depends on your specific needs and circumstances.

How to Implement Prerendering

To set up prerendering, you need to add suitable middleware to your backend, CDN, or web server.

- The middleware identifies a bot asking for your page and sends a request to the prerender service.

- If it’s the first request, the prerender service gets resources from your server and renders the page on its server.

- After that, the prerender service gives the cached version when it identifies a bot user-agent.
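The flow above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Prerender’s actual middleware: the bot token list, the PrerenderService class, and the handle_request function are hypothetical stand-ins, and a real setup would call the prerender service over HTTP.

```python
# Hypothetical, incomplete list of substrings found in crawler user-agents.
BOT_TOKENS = ("googlebot", "bingbot", "duckduckbot", "baiduspider", "yandex")


def is_bot(user_agent):
    """Very rough bot detection based on user-agent substrings."""
    ua = user_agent.lower()
    return any(token in ua for token in BOT_TOKENS)


class PrerenderService:
    """Stand-in for a prerender service: render once, then serve from cache."""

    def __init__(self, render):
        self._render = render   # hypothetical callable: path -> static HTML
        self._cache = {}
        self.render_count = 0

    def get(self, path):
        if path not in self._cache:
            # First request for this path: render it and cache the result.
            self.render_count += 1
            self._cache[path] = self._render(path)
        return self._cache[path]


def handle_request(path, user_agent, service, serve_app):
    """Middleware step: bots get prerendered HTML, users get the normal app."""
    if is_bot(user_agent):
        return service.get(path)
    return serve_app(path)


service = PrerenderService(lambda path: f"<html>static {path}</html>")
serve_app = lambda path: "<div id='root'></div>"
googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

print(handle_request("/pricing", googlebot, service, serve_app))
print(handle_request("/pricing", "Mozilla/5.0 (Windows NT 10.0) Chrome/120", service, serve_app))
```

Substring matching on the user-agent is the simplest possible check and is used here only to keep the sketch short.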

Wrapping Up

We’ve looked at crawl budget optimization and its effect on SEO.

We’ve discussed SEO challenges for JavaScript sites, best practices for JavaScript SEO, and how JavaScript frameworks affect SEO. We’ve also examined other ways to index JavaScript sites, focusing on dynamic rendering and prerendering.

To learn more about crawl budget optimization and how it can help your SEO, download Prerender’s free guide, A Technical SEO’s Guide to Crawl Budget Optimization.

Disclosure: I’m a growth consultant at Prerender.

Crawl budget optimization is often the unsung hero in the realm of SEO. In the dynamic landscape of 2024, where search engines play a pivotal role in online visibility, understanding and enhancing your site's crawl budget is paramount. Prioritizing factors like site speed, mobile friendliness, and content quality not only boosts your website's user experience but also earns favor with search engines, allowing for more efficient crawling and indexing. Keeping abreast of the latest SEO strategies ensures that your website remains a contender in the digital arena, harnessing the power of crawl budget to its fullest potential.



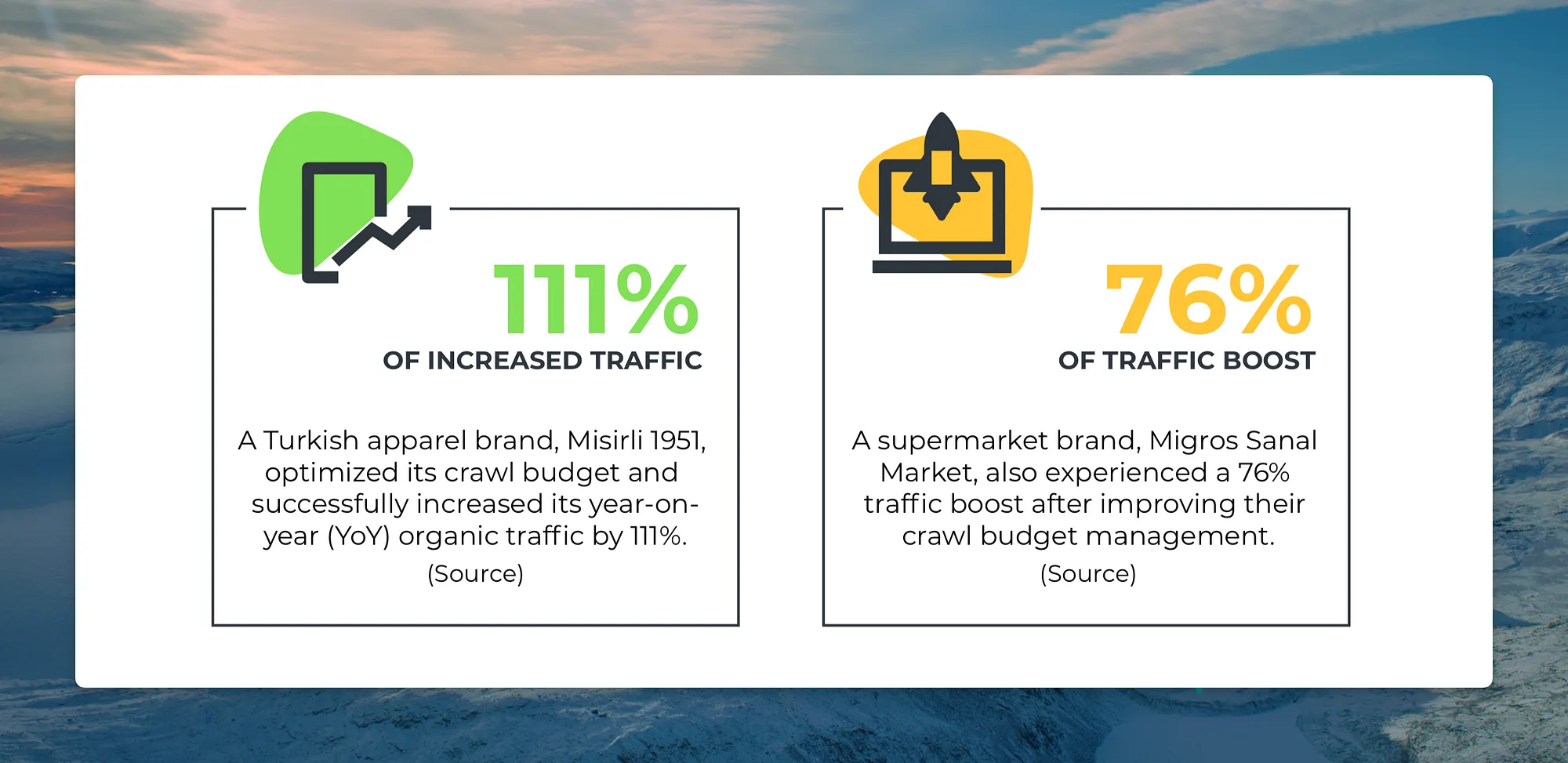

Crawl budget is a crucial concept in the field of Search Engine Optimization (SEO) that directly impacts how search engines index and rank your website. It refers to the number of pages search engines allocate to crawl on your site within a given time frame. Here's why crawl budget matters for SEO:

Indexing Efficiency:

Search engines have a limited amount of resources to crawl and index web pages. If your website has a larger crawl budget, search engines can index more of your content, increasing the chances of your pages appearing in search results.

Faster Updates:

With a higher crawl budget, search engines can more quickly discover and index new content or changes to existing pages on your site. This is especially important for time-sensitive information or regularly updated content.

Pro SEO here: unless your website is as big as nytimes etc., you shouldn't worry about crawl budget. Websites with 1,000 pages shouldn't care about this at all.
